While there has been an explosive growth in public cloud adoption due to the COVID‑19 pandemic, enterprises are also embracing hybrid cloud, where they run workloads in both public clouds and on premises (in a private data center, say).

This hybrid approach enables enterprises to deploy their workloads in environments that best suit their needs. For example, enterprises might deploy mission‑critical workloads with highly sensitive data in on‑prem environments while leveraging the public cloud for workloads such as web hosting and edge services like IoT, which require only limited access to the core network infrastructure.

When you run workloads on premises, you can further choose between running them on bare metal or in virtual (hypervisor) environments. To help you determine the optimal and most affordable on‑premises solution that satisfies your performance and scaling needs, we provide a sizing guide that compares NGINX performance in the two environments.

In this blog we describe how we tested NGINX to arrive at the values published in the sizing guide. Because many of our customers also deploy apps in Kubernetes, we also step through our testing of NGINX Ingress Controller on the Rancher Kubernetes Engine (RKE) platform, and discuss how the results compare with NGINX running in traditional on‑premises architectures.

Testing Methodology

We used two architectures to measure and compare the performance of NGINX in different environments. For each architecture, we ran separate sets of tests to measure two key metrics: requests per second (RPS) and SSL/TLS transactions per second (TPS). For details, see Metrics Collected.

Traditional On‑Premises Architecture

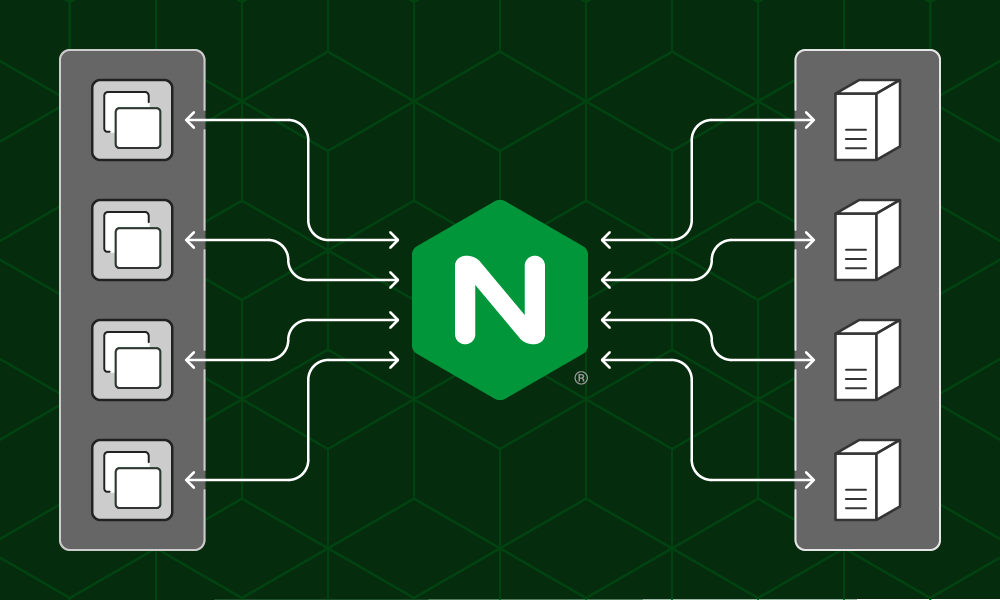

NGINX was the system under test (SUT) in two conditions: running on bare metal and in a hypervisor environment. In both conditions, four Ixia clients generated requests, which NGINX load balanced to four Ixia web servers. The web servers returned a 128-byte file in response to each request.

We used the following software and hardware for the testing:

- The Ixia clients and web servers were deployed using the IxLoad software on Ixia Xcellon-Ultra™ XT80v2 application blades.

- For the SUT in both conditions, CentOS 7 was the operating system on PowerEdge servers with Intel NICs.

- The hypervisor was VMware ESXi version 7.0.0.

Download the NGINX configuration files that were used to deploy NGINX in the testing environment.

Kubernetes Architecture

The SUT was NGINX Ingress Controller (based on NGINX Open Source) running on the Rancher Kubernetes Engine (RKE) bare‑metal platform. Four Ixia clients generated requests, which NGINX Ingress Controller directed to the backend Kubernetes deployment, a web server which returned a 1-KB file in response to each request.

We used the following software and hardware for the testing:

- The Ixia clients, deployed on Ixia Xcellon-Ultra XT80v2 application blades with the IxLoad software, generated requests. The Ixia web server was not required because we were testing workloads in a Kubernetes environment.

- The operating system for both the SUT and the node hosting the backend application was CentOS 7. The SUT and backend application ran on two separate PowerEdge server nodes with Intel NICs.

- The NGINX Ingress Controller version was 1.11.0.

Download the YAML files that were applied to deploy the NGINX Ingress Controller on bare‑metal RKE.

Metrics Collected

We ran tests to collect two performance metrics:

- Requests per second (RPS) – The number of requests NGINX can process per second, averaged over a fixed period. In this case requests from the Ixia client used the http scheme.

- SSL/TLS transactions per second (TPS) – The number of new HTTPS connections NGINX can establish and serve per second. In this case requests from the Ixia client used the https scheme. We used RSA with a 2048-bit key size and Perfect Forward Secrecy.

The Ixia client sent a series of HTTPS requests, each on a new connection. The Ixia client and NGINX performed a TLS handshake to establish a secure connection, then NGINX proxied the request to the backend. The connection was closed after the request was satisfied.
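The TPS measurement loop described above can be sketched in Python. This is a minimal, single-threaded illustration and not the IxLoad implementation: the function names `one_tls_transaction` and `transactions_per_second` are hypothetical, and a real load generator runs many such loops in parallel to saturate the SUT.

```python
import socket
import ssl
import time


def one_tls_transaction(host, port=443, path="/", timeout=5.0):
    """Open a new connection, complete a TLS handshake, send one request, close."""
    # A fresh context per call means no TLS session is carried over,
    # so every call performs a full handshake.
    context = ssl.create_default_context()
    with socket.create_connection((host, port), timeout=timeout) as raw_sock:
        with context.wrap_socket(raw_sock, server_hostname=host) as tls_sock:
            request = (
                f"GET {path} HTTP/1.1\r\n"
                f"Host: {host}\r\n"
                "Connection: close\r\n\r\n"
            )
            tls_sock.sendall(request.encode("ascii"))
            # Drain the response until the server closes the connection.
            while tls_sock.recv(4096):
                pass


def transactions_per_second(transaction, duration_seconds, clock=time.monotonic):
    """Run `transaction` back to back for a fixed period and return the average rate."""
    completed = 0
    start = clock()
    while clock() - start < duration_seconds:
        transaction()
        completed += 1
    elapsed = clock() - start
    return completed / elapsed
```

Against a test host you control, a call such as `transactions_per_second(lambda: one_tls_transaction("sut.example.test"), 10.0)` would report the average number of new HTTPS connections completed per second over ten seconds (the hostname here is a placeholder). The `clock` parameter exists only so the timing logic can be exercised without a network.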

Performance Analysis

The tables in the following sections report the number of RPS and SSL TPS achieved with different numbers of CPUs available to NGINX in both the traditional and Kubernetes architectures.

Traditional On‑Premises Architecture

The performance of NGINX on bare metal increases linearly until the number of available CPU cores reaches eight. We couldn’t test a larger number of cores because the Ixia clients could not generate enough requests to saturate the SUT (reach 100% CPU utilization) when there were eight or more cores.

We found that virtualization worsens performance by a small but measurable amount compared to bare metal. CPU instructions in a hypervisor require more clock cycles than the same instructions on bare metal, which adds overhead.

| Hardware Cost | CPU Cores | RPS – Bare metal | RPS – Hypervisor | SSL TPS – Bare metal | SSL TPS – Hypervisor |
|---|---|---|---|---|---|
| $750 | 1 | 48,000 | 40,000 | 800 | 750 |
| $750 | 2 | 94,000 | 75,000 | 1,600 | 1,450 |
| $1,300 | 4 | 192,000 | 132,000 | 3,200 | 2,900 |
| $2,200 | 8 | 300,000 | 280,000 | 5,200 | 5,100 |
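To make the hypervisor overhead and the scaling behavior in the table above easier to read, the following short Python sketch expresses the hypervisor results as a percentage of the bare-metal results and divides bare-metal RPS by core count. The numbers are copied from the table; the variable and function names are chosen for illustration only.

```python
# Values copied from the table above (bare metal vs. hypervisor).
CORES = [1, 2, 4, 8]
RPS_BARE_METAL = [48_000, 94_000, 192_000, 300_000]
RPS_HYPERVISOR = [40_000, 75_000, 132_000, 280_000]
TPS_BARE_METAL = [800, 1_600, 3_200, 5_200]
TPS_HYPERVISOR = [750, 1_450, 2_900, 5_100]


def percent_of(values, baseline):
    """Express each value as a percentage of the matching baseline value."""
    return [round(100 * v / b, 1) for v, b in zip(values, baseline)]


rps_retained = percent_of(RPS_HYPERVISOR, RPS_BARE_METAL)
tps_retained = percent_of(TPS_HYPERVISOR, TPS_BARE_METAL)
# Bare-metal RPS divided by core count shows how close scaling is to linear.
rps_per_core = [rps // n for rps, n in zip(RPS_BARE_METAL, CORES)]

for n, r, t, per_core in zip(CORES, rps_retained, tps_retained, rps_per_core):
    print(
        f"{n} cores: hypervisor RPS {r}% / TPS {t}% of bare metal; "
        f"bare-metal RPS per core {per_core}"
    )
```

With these figures, the hypervisor retains roughly 69% to 93% of bare-metal RPS and roughly 91% to 98% of bare-metal TPS. Bare-metal RPS per core stays near 48,000 through four cores and falls to 37,500 at eight cores, which fits the note that the Ixia clients could not saturate the SUT at that size.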

Kubernetes Architecture

The performance of NGINX Ingress Controller in Kubernetes scales linearly as we increase the number of cores to eight.

Comparing the results in the following table to those for a traditional architecture, we see that running NGINX in Kubernetes (as NGINX Ingress Controller) significantly worsens performance for network‑bound operations like serving requests (measured as RPS). This is due to the underlying container networking stack used for connection to other services.

On the other hand, we see no performance penalty in Kubernetes for CPU‑intensive operations like SSL/TLS handshakes (measured as TPS). Indeed, TPS is slightly better in Kubernetes than in the traditional environments.

Additionally, we see a roughly 10% increase in TPS when we enable HyperThreading (HT).

| Cores | RPS | SSL TPS (RSA) | SSL TPS (RSA) with HyperThreading | Hardware Cost |
|---|---|---|---|---|
| 1 | 24,000 | 900 | 1,000 | $750 |
| 2 | 48,000 | 1,750 | 1,950 | $750 |
| 4 | 95,000 | 3,500 | 3,870 | $1,300 |
| 8 | 190,000 | 7,000 | 7,800 | $2,200 |
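The comparisons drawn from the table above can be checked with a few lines of arithmetic. The Python sketch below computes the change in RPS and TPS from bare metal to Kubernetes, and the TPS gain from HyperThreading, using only the published figures from the two tables. The names are illustrative.

```python
# Values copied from the two results tables in this post.
CORES = [1, 2, 4, 8]
RPS_BARE_METAL = [48_000, 94_000, 192_000, 300_000]
TPS_BARE_METAL = [800, 1_600, 3_200, 5_200]
RPS_KUBERNETES = [24_000, 48_000, 95_000, 190_000]
TPS_KUBERNETES = [900, 1_750, 3_500, 7_000]
TPS_KUBERNETES_HT = [1_000, 1_950, 3_870, 7_800]


def percent_change(new, old):
    """Signed percentage change from `old` to `new`."""
    return round(100 * (new - old) / old, 1)


rps_change = [percent_change(k, b) for k, b in zip(RPS_KUBERNETES, RPS_BARE_METAL)]
tps_change = [percent_change(k, b) for k, b in zip(TPS_KUBERNETES, TPS_BARE_METAL)]
ht_gain = [percent_change(h, t) for h, t in zip(TPS_KUBERNETES_HT, TPS_KUBERNETES)]

for n, rps, tps, ht in zip(CORES, rps_change, tps_change, ht_gain):
    print(
        f"{n} cores: RPS {rps:+}% and TPS {tps:+}% vs. bare metal; "
        f"HyperThreading TPS gain {ht:+}%"
    )
```

At one to four cores, Kubernetes RPS is about half the bare-metal value, while TPS is higher at every core count and the HyperThreading gain stays between 10% and 12%. The eight-core comparison should be read with care, because the bare-metal SUT was not saturated at that size.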

Conclusion

Here are the key takeaways to help you understand in which environment your workloads will run best, and the performance implications of that choice.

- If your application infrastructure is performing network‑bound operations (measured as RPS in our tests), then running NGINX in a traditional bare‑metal environment is optimal for performance. Running NGINX Ingress Controller in Kubernetes incurs the most significant cost in performance for network‑bound operations due to the underlying container networking stack used in the environment.

- Hypervisors introduce a small but measurable cost in performance for both network‑ and CPU‑bound operations (in our tests, RPS ranged from roughly 69% to 93% of the bare‑metal value, and TPS from roughly 91% to 98%).

- If your application infrastructure is performing CPU‑bound operations (measured as TPS in our tests), there is little to no performance difference between NGINX in traditional and Kubernetes environments.

- In our testing, HyperThreading improved performance by roughly 10% for parallelizable CPU‑bound operations like encryption (measured as SSL TPS in the Kubernetes environment).
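As a rough illustration of how the takeaways above could feed a sizing decision, the Python sketch below picks the lowest-cost tested configuration that meets a target RPS and TPS. It is a hypothetical helper, not an official sizing tool: the `Option` type and `cheapest_option` function are invented for this example, the figures are copied from the two results tables, and it assumes the listed hardware cost applies to each environment at a given core count, with no licensing or operational costs included.

```python
from typing import NamedTuple, Optional


class Option(NamedTuple):
    environment: str
    cores: int
    hardware_cost_usd: int
    rps: int
    tps: int


# Figures copied from the results tables (Kubernetes TPS without HyperThreading).
OPTIONS = [
    Option("bare metal", 1, 750, 48_000, 800),
    Option("bare metal", 2, 750, 94_000, 1_600),
    Option("bare metal", 4, 1_300, 192_000, 3_200),
    Option("bare metal", 8, 2_200, 300_000, 5_200),
    Option("hypervisor", 1, 750, 40_000, 750),
    Option("hypervisor", 2, 750, 75_000, 1_450),
    Option("hypervisor", 4, 1_300, 132_000, 2_900),
    Option("hypervisor", 8, 2_200, 280_000, 5_100),
    Option("kubernetes", 1, 750, 24_000, 900),
    Option("kubernetes", 2, 750, 48_000, 1_750),
    Option("kubernetes", 4, 1_300, 95_000, 3_500),
    Option("kubernetes", 8, 2_200, 190_000, 7_000),
]


def cheapest_option(min_rps: int = 0, min_tps: int = 0) -> Optional[Option]:
    """Return the lowest-cost tested configuration meeting both targets, if any."""
    candidates = [o for o in OPTIONS if o.rps >= min_rps and o.tps >= min_tps]
    if not candidates:
        return None  # No tested configuration reaches the target.
    # Prefer lower cost, then fewer cores; remaining ties keep list order.
    return min(candidates, key=lambda o: (o.hardware_cost_usd, o.cores))


print(cheapest_option(min_rps=100_000))
print(cheapest_option(min_tps=6_000))
```

The helper only chooses among the measured data points. It does not interpolate between core counts or extrapolate beyond eight cores, so a target above the tested range returns `None`.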

Want to duplicate our testing or try NGINX and NGINX Ingress Controller in your environment? You can download NGINX Open Source and download the NGINX Open Source‑based NGINX Ingress Controller.